I’m a big fan of automating boring stuff on my computer. I’ve written programs in Python and JS to place orders on online shopping websites, transfer data between multiple Excel files, extract information from PDFs, and so on. In 2020, it’s almost guaranteed that you can find a Python library that offers full or partial access to the APIs of the specific program you’d like to automate: Selenium supports multiple languages for webpage automation and testing; pywinauto is good for automating native Windows apps; openpyxl lets you read and write Excel files without even installing MS Excel (no macro support yet); and the list goes on. Despite their ease of use, these libraries are tied to the specific tasks they were designed for, so they barely allow cross-program or general-purpose automation. Complex programs that intentionally close their APIs, e.g. video games, also leave little room for these libraries. Image-based automation, which gathers information directly from the user’s screen, imitates how human beings process information and is therefore a promising approach for general-purpose automation.

I’ve been playing an MMORPG called Albion Online for a few months. It features a player-driven economy in which most in-game items are produced, traded and consumed by players, with very limited intervention from the game itself. One way to earn game currency is by farming crops. Players can buy seeds from the market, sow them on their personal islands, water the seedlings, harvest the crops the next day and then sell them on the market to make money. It’s quite a tedious daily task, so I challenged myself to write a Python program that automates the process.

Disclaimer

Using an automation script violates the terms of service of the game (specifically term 13.3 No Manipulation). This article does not share any snippet of the automation script, nor does it promote using such a script in game. The sole purpose of this article is to share my findings from applying an image-based automation script to a complex computer program.

Please do not contact me to ask for this script, because

- the script is written in such a way that it only works on my own computer

- I have no interest in profiting from selling it

- I enjoy the game and I don’t want the in-game economy to be disrupted

- I will strongly suspect that you work for SBI (the game developer) and are trying to obtain my game information so that you can ban my account

I’ll try my best to answer any technical questions you may have, but nothing beyond that.

Why Image-Based?

Because other approaches won’t work. Here’s what the script is expected to do in sequence:

1. open the chest near the south entrance on my island

2. equip my character with a bag

3. transfer seeds from the chest to the bag

4. move the character to the first farm near the north end of the island

5. harvest the products by clicking the nine product icons in sequence and clicking the “harvest” button every time the confirmation window pops up

6. select the correct seed icon from the bag/inventory, click the “place” button and click the right position on the farmland

7. click on each seedling (placed seed) and click the “water” button every time the confirmation window pops up

8. move to the remaining four farms in sequence and repeat the harvest-sow-water actions (steps 5-7)

9. move back to the chest and store all products

10. un-equip the bag and store it in the chest.

Using a script to trade in a player-driven market seems like a dangerous move to me, so I chose to purchase seeds manually and store them in a chest on my island. I also sell the products manually, for the same reason.

Looking at the steps above, one might suggest using a simple macro repeater, which records all mouse and keyboard activity in one session and replays it in the next harvest cycle. Well, there are at least two reasons why a macro repeater can’t do the job.

For one, there are randomly generated rabbits running around on the island. Most mouse clicks recorded by a macro repeater are meant to move the character to a desired position, but when replayed, these clicks can land on one or more of the rabbits. The character then goes off to hunt the rabbits instead of moving to the right position, which immediately invalidates all the mouse and keyboard actions that follow. The other reason is the nature of online games in general. Communication between the client and the server isn’t always stable, which is why lag occurs from time to time. A macro repeater can’t tolerate any lag in game, because lag will offset or even cancel mouse actions, which then leads to complete failure of the program.

For the script to work, it has to handle both the input side and the output side; in other words, how the script gathers information (current character state, location, item positions, etc.) from the game and how it sends commands to the game to control the character. The output side is easy to solve because several Python libraries can send mouse and keyboard actions once they are given sufficient position information. I’m using pyautogui for that purpose. The input side is harder: the game uses anti-cheat software that prevents third-party programs from hijacking the game packets or modifying game data in RAM. Even if we were willing to risk being caught by the anti-cheat software, most packets sent and received by the game, especially those containing critical position information, appear to be encrypted, and I haven’t found a way to easily obtain that information from RAM using Python. (A recent Reddit post states that the packets are actually in plain text, but when I looked at them they were all encoded, to say the least. I’m sure there are people who know much more about packet sniffing and have more direct ways of obtaining game data.) An image-based solution seems to be the only one I can rely on. The idea is that the script “looks at the screen” and figures out where the character is, where the needed items are, where the action buttons are, and so on. Based on the gathered information, it decides on the next action and sends the corresponding mouse/keyboard commands.
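As a rough, generic illustration of that look-decide-act cycle (not taken from the farming script itself, which stays private), the control flow can be sketched as a loop over three pluggable functions. The names `run_loop`, `observe`, `decide` and `act` are made up for this sketch, and a toy "world" stands in for the game so the example runs anywhere.

```python
def run_loop(observe, decide, act, max_steps=100):
    """Repeat observe -> decide -> act until `decide` returns None.

    observe(): returns whatever was read from the screen (hypothetical)
    decide(state): returns the next command, or None when the job is done
    act(command): sends the command (in a real script: mouse/keyboard input)
    Returns the number of commands that were sent.
    """
    for step in range(max_steps):
        state = observe()
        command = decide(state)
        if command is None:
            return step
        act(command)
    raise RuntimeError("gave up: max_steps reached without finishing")


# Toy stand-in for the game: a character walking along a line to x = 3.
world = {"pos": 0}

steps = run_loop(
    observe=lambda: world["pos"],
    decide=lambda pos: "right" if pos < 3 else None,
    act=lambda command: world.update(pos=world["pos"] + 1),
)
print(steps, world["pos"])
```

The `max_steps` cap is a design choice for this sketch: a loop that drives real input should always have a way to give up.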

Development Environment

The script runs on a 5-year-old laptop with an entry-level i7 CPU, an entry-level NVIDIA GPU and a 128 GB SSD. It’s not the most powerful computer you’ve ever seen; in fact, it can barely reach 60 FPS under high video settings in the game. To make sure that the script can run without much lag, I set the game resolution to the lowest setting, which is a huge compromise considering that every action of the script depends on a clear image. Nonetheless, I was surprised that the laptop could run both the game and the script at the same time.

Cross-Correlation

Before we get into the details of the script, it might help to understand the concept behind image matching. I’m using a Python library called imagesearch, which offers a very simple API for image search. The author of the library also wanted to automate a game, so he built a Python wrapper around OpenCV and pyautogui. Here are the tutorial and the documentation for the library:

- https://steemit.com/gaming/@howo/how-i-made-my-own-python-bot-to-automate-complex-games

- https://brokencode.io/how-to-easily-image-search-with-python/

The core method used to find the matching point is called cross-correlation. You may find its Wikipedia page helpful.

Cross-correlation of two (1-dimensional) signals generates another (1-dimensional) signal that represents the similarity of the two signals at various offsets. Cross-correlation of two (2-dimensional) images results in another (2-dimensional) image, which is the “similarity heat map” of the two images. Of the two images being compared, one comes directly from your computer screen and the other is the sample image you are searching for. On the “heat map”, the location of the highest-valued pixel is where the sample image is most likely to appear on your screen, and the value of that pixel tells you how similar the sample image is to that region of your screen. Forgive my wordy explanation; let’s jump straight to the conclusion. The image-search function has three important inputs: a screenshot of the game, a sample image, and a precision score that sets the threshold for the similarity score. If the highest similarity score found is lower than the precision score, the function returns no result. Every time I use this image-search function in the script, the precision score needs to be calibrated to get the best result.
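To make the “heat map” idea concrete, here is a minimal pure-Python sketch of normalized cross-correlation on tiny grayscale images stored as nested lists. It is an illustration only, not the imagesearch implementation: the function name `match_template` and the toy data are made up, and a real script would hand this work to an optimized library such as OpenCV.

```python
import math


def match_template(screen, sample, precision=0.8):
    """Slide `sample` over `screen` (2-D lists of grayscale values).

    Returns (row, col, score) of the best match, or None if the best
    similarity score is below `precision`.
    """
    sh, sw = len(sample), len(sample[0])
    flat = [v for row in sample for v in row]
    s_mean = sum(flat) / len(flat)
    s_dev = [v - s_mean for v in flat]
    s_norm = math.sqrt(sum(d * d for d in s_dev))

    best = None
    for r in range(len(screen) - sh + 1):
        for c in range(len(screen[0]) - sw + 1):
            patch = [screen[r + i][c + j] for i in range(sh) for j in range(sw)]
            p_mean = sum(patch) / len(patch)
            p_dev = [v - p_mean for v in patch]
            p_norm = math.sqrt(sum(d * d for d in p_dev))
            if s_norm == 0 or p_norm == 0:
                continue  # flat region: the correlation is undefined, skip it
            # One pixel of the "heat map": similarity at offset (r, c).
            score = sum(a * b for a, b in zip(s_dev, p_dev)) / (s_norm * p_norm)
            if best is None or score > best[2]:
                best = (r, c, score)

    if best is None or best[2] < precision:
        return None
    return best


# Toy data: a 5x6 black "screen" with a 2x2 sample pasted at row 2, column 3.
sample = [[10, 200], [200, 10]]
screen = [[0] * 6 for _ in range(5)]
for i in range(2):
    for j in range(2):
        screen[2 + i][3 + j] = sample[i][j]

result = match_template(screen, sample, precision=0.9)
print(result)
```

Subtracting the mean and dividing by the norms keeps the score between -1 and 1, which is what makes a single precision threshold meaningful across different samples.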

Screenshot Efficiency

To achieve a short response time, each screenshot needs to be taken efficiently. When I started working on this, I used one of the most popular Python screenshot libraries, pyscreenshot. Not long after I wrote the first test script, I realized that it took too long to capture the screen and the script couldn’t control the character smoothly. I could have switched to a more powerful computer, but I don’t have the budget, so I started trying other screenshot libraries in the hope of finding a more efficient one. What I found interesting in the end was that different screenshot libraries take noticeably different amounts of time to capture the screen. I’m not sure why some libraries perform better than others, since they are basically doing the same job. The most efficient Python screenshot library I found is called Python-MSS.

The figure above shows the time and the average CPU usage of both pyscreenshot and Python-MSS when each of them is asked to capture the screen 100 times and save the result to a variable. It takes 0.35 seconds for pyscreenshot to capture one frame on my laptop, while it takes only 0.09 seconds for Python-MSS to do the same task. Python-MSS is almost 4 times faster than pyscreenshot while consuming only 5% more CPU, a huge improvement for script performance.
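A comparison like this can be reproduced with a small timing harness. The sketch below is a generic one with made-up names (`time_capture`, `fake_capture`); it uses a stand-in capture function so that it runs on any machine, and it measures wall-clock time only, not CPU usage.

```python
import time


def time_capture(capture, repeats=100):
    """Return the average number of seconds one call to `capture` takes."""
    start = time.perf_counter()
    for _ in range(repeats):
        frame = capture()  # keep the result in a variable, as in the test above
    return (time.perf_counter() - start) / repeats


def fake_capture():
    # Stand-in for a real screen grab: allocates one byte per pixel of a
    # 1920x1080 frame. Replace it with a real capture call to benchmark.
    return bytes(1920 * 1080)


average = time_capture(fake_capture, repeats=20)
print(f"{average:.4f} s per frame")
```

To time a real library, pass its capture call instead of `fake_capture`. With Python-MSS, for example, that could be `lambda: sct.grab(sct.monitors[1])` inside a `with mss.mss() as sct:` block.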

Navigation

Working out where the character currently is turned out to be the most complex task in the script, and about 70% of the development time was spent on building a navigation system. The game itself doesn’t provide coordinate data, like longitude and latitude, or any other numeric representation of the character’s current location.

One possible way to obtain the current location is to look for landmarks and find the location of the character relative to them. This method may work great for 2D games, but Albion is a 2.5D game and objects change shape when the camera moves.

The gate shown in the above figures is the entrance gate near the south end of the island. Notice how the shape of the gate changes when the character moves from the front to the back of the gate. Despite being subtle, this change in shape is enough to confuse the image-search function, and it’s nearly impossible to find a sample image that allows the script to recognize the gate in both cases. The lighting in game also changes over time. The scene shifts from daylight to moonlight and back to daylight every real-life hour, which in my opinion is a stunning design. I love spending time staring at the reflection of stars in the water during in-game nighttime; it’s such a relief from the intense PvP battles. This change in lighting also makes direct image search useless.

AI is certainly a viable solution for this type of object recognition, but it requires too much computing power. A top-notch GPU can barely run AI object recognition at 30 FPS with optimized settings. It also requires a huge number of labelled pictures to train the model, which is not worth my time. All in all, AI is overkill.

SIFT matching might work well in this situation. OpenCV even provides SIFT-matching functions in Python. I didn’t consider this approach when I wrote the script, and I suspect that it would also take a considerable amount of computing power, which my humble laptop doesn’t possess. I might give it a try in my next gaming script.

Luckily, the game has a diamond-shaped mini-map at the bottom-right corner with an arrow-shaped icon to represent the current location that looks like this:

The mini-map gives critical location information with respect to the island, so I decided to take advantage of it. The blue arrow that shows the character’s location changes direction whenever the character changes direction, so I couldn’t use the image-search function to obtain the location of the blue arrow directly (it is shown as a red arrow in the figure below…).

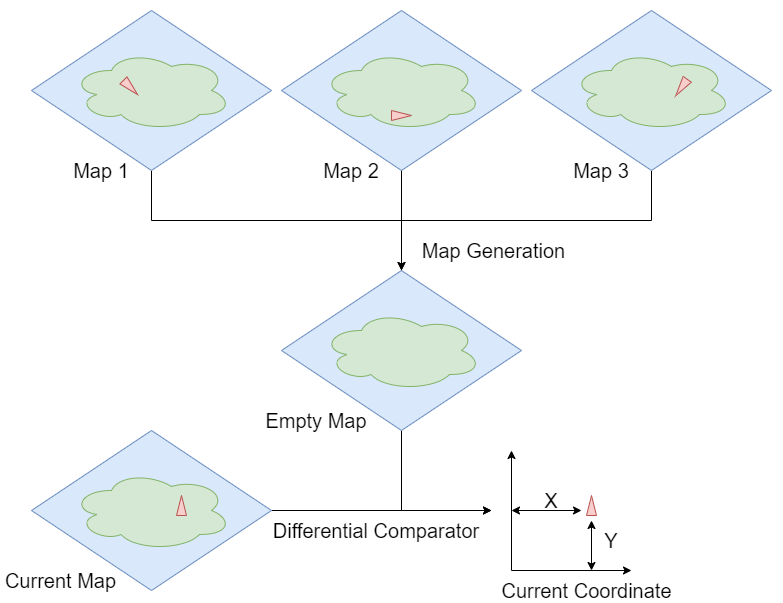

To use the mini-map, I need an empty mini-map as a background, so I wrote another program to generate one. Three mini-maps were captured with the character standing at locations far from each other. These three maps went through a pixel-wise comparison, and a new map was generated such that each of its pixels matches the corresponding pixel on at least two of the three source maps. The new map is the empty mini-map with no blue arrow on it. From there, the current mini-map on the screen is captured and compared with the empty map, and the difference between the two maps is the set of pixels that make up the blue arrow. Notice that this blue arrow is direction-dependent, so the mean of these pixel locations (vectors) doesn’t point to the exact location of the character on the mini-map. This issue takes another few steps to solve, and I’m not going to discuss the details here. One thing I’d like to note is that high resolution settings help a lot with this task, since we are dealing with really small images while trying to obtain very accurate information.
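The two-out-of-three vote and the map difference can be sketched in a few lines. This is a generic illustration on tiny made-up maps, with pixels as plain integers and hypothetical function names; real mini-map captures would be RGB images. The plain centroid at the end is only the rough "mean of the locations" mentioned above, without the direction correction.

```python
from collections import Counter


def build_empty_map(maps):
    """Pixel-wise majority vote over equally sized maps (2-D lists of pixels)."""
    height, width = len(maps[0]), len(maps[0][0])
    empty = []
    for y in range(height):
        row = []
        for x in range(width):
            pixel, count = Counter(m[y][x] for m in maps).most_common(1)[0]
            # With three maps taken far apart, the arrow covers a given pixel
            # in at most one of them, so two maps agree on the background.
            row.append(pixel if count >= 2 else None)
        empty.append(row)
    return empty


def arrow_pixels(current, empty):
    """Return (x, y) of every pixel that differs from the empty map."""
    return [(x, y)
            for y, row in enumerate(current)
            for x, pixel in enumerate(row)
            if empty[y][x] is not None and pixel != empty[y][x]]


def centroid(points):
    xs, ys = zip(*points)
    return (sum(xs) / len(xs), sum(ys) / len(ys))


# Toy 4x3 "mini-map"; the value 9 plays the role of an arrow pixel.
background = [[1, 1, 1, 1], [2, 2, 2, 2], [3, 3, 3, 3]]


def with_arrow(x, y):
    m = [row[:] for row in background]
    m[y][x] = 9
    return m


empty = build_empty_map([with_arrow(0, 0), with_arrow(3, 1), with_arrow(1, 2)])
current = with_arrow(2, 0)
current[0][3] = 9
points = arrow_pixels(current, empty)
print(points, centroid(points))
```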

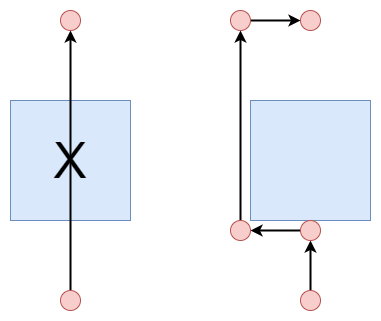

Once we know the coordinates of the arrow on the mini-map (A) and the coordinates of the destination on the mini-map (B), a vector pointing from A to B can be obtained. Re-position this vector so that it starts from the character’s feet on screen. Where the vector now points is where the mouse should click to move the character. This operation is looped until the arrow’s position overlaps with the destination.
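The vector arithmetic is simple enough to write down. The sketch below is a hedged illustration with made-up names and numbers: it assumes the mini-map axes line up with the screen axes, and it clicks at a fixed distance `reach` (in screen pixels) from the character’s feet, which is an assumption of this sketch rather than something the game dictates.

```python
import math


def click_target(arrow, dest, feet, reach=150.0):
    """Screen point to click so the character heads from `arrow` toward `dest`.

    arrow, dest: (x, y) on the mini-map; feet: (x, y) on the screen.
    """
    dx, dy = dest[0] - arrow[0], dest[1] - arrow[1]
    distance = math.hypot(dx, dy)
    if distance == 0:
        return feet  # already there, nothing to do
    scale = reach / distance  # keep the direction, fix the length
    return (round(feet[0] + dx * scale), round(feet[1] + dy * scale))


def arrived(arrow, dest, tolerance=2.0):
    """True when the arrow is within `tolerance` mini-map pixels of `dest`."""
    return math.hypot(dest[0] - arrow[0], dest[1] - arrow[1]) <= tolerance


target = click_target(arrow=(10, 10), dest=(13, 14), feet=(400, 300), reach=50)
print(target)
```

In this example the A-to-B vector is (3, 4) with length 5, so a reach of 50 pixels scales it to (30, 40) and the click lands at (430, 340).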

That’s not the end of the story. At this point the navigation system can only move the character in a straight line, and obstacles on that line can easily block the way. To get around this, multiple “bridge points” are placed around each obstacle, and the character is guided from one bridge point to the next to bypass it.
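One simple way to work with bridge points is to treat them as an ordered list of waypoints and to head for the next one as soon as the current one is reached. The sketch below shows only that bookkeeping; the function name, the coordinates and the tolerance are all hypothetical.

```python
import math


def advance(position, route, index, tolerance=2.0):
    """Return the index of the waypoint to head for next.

    Skips every waypoint that `position` has already reached. A result equal
    to len(route) means the whole route is done.
    """
    while index < len(route):
        wx, wy = route[index]
        if math.hypot(wx - position[0], wy - position[1]) > tolerance:
            break
        index += 1
    return index


# Made-up bridge points that lead around an obstacle sitting near (2, 2).
route = [(5, 0), (5, 5), (0, 5)]

print(advance((0, 0), route, 0))  # still heading for the first point
print(advance((5, 1), route, 0))  # first point reached, go to the second
print(advance((0, 5), route, 2))  # last point reached, route finished
```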

Notification System

Problems can come out of nowhere, and this is especially true for online games. It is necessary to have a notification system that sends me an alert whenever there’s an issue with the script. I have an Android phone and I’ve used a few notification apps that have APIs for Python. Unfortunately, most of them have shut down and the rest now charge per use. I spent some time searching but couldn’t find a good, free platform that can push notifications to my phone. But then I realized that I don’t really need a notification platform; any SNS with an open API will work. It didn’t take long to find a Python library called fbchat that can log in to Facebook and send messages, so my farming bot now sends me messages like this (apparently I never keep enough wheat seeds in the crate):

Miscellaneous

You are a really good reader if you’ve made it this far. Just bear with me for a few more notes I’d like to share.

- Keep in mind that unpredictable errors can occur from time to time due to lag or bugs in the game, so it’s a good idea to track the progress and save it to a file on disk. I use openpyxl to read and write data in Excel files.

- The script sends mouse and keyboard commands multiple times per second. This is dangerous because when it runs into problems you will have a hard time stopping it: there’s simply not enough time to move the cursor to the red cross and click. pyautogui has a setting for this called pyautogui.FAILSAFE. Setting it to True turns on fail-safe mode: whenever the cursor is moved to the top-left corner of the screen, pyautogui raises an exception and the script stops.

- It’s a good habit to write a confirmation function immediately after each action function to check whether the action has actually been executed. When automating a complex program, one should never assume that each line of code will run as planned.
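The last note can be turned into a small reusable pattern: perform the action, wait a moment, check the result, and retry a limited number of times before giving up. The sketch below is generic and uses made-up names; in a real script, `action` would send a click and `confirm` would look for the expected window on screen.

```python
import time


def act_and_confirm(action, confirm, retries=3, wait=0.5):
    """Run `action`, then check `confirm`; retry up to `retries` times.

    Returns the attempt number that succeeded, or raises RuntimeError.
    """
    for attempt in range(1, retries + 1):
        action()
        time.sleep(wait)  # give a laggy game time to react
        if confirm():
            return attempt
    raise RuntimeError(f"action not confirmed after {retries} attempts")


# Toy stand-in: a "chest" that only opens on the second click.
state = {"clicks": 0}


def click_chest():
    state["clicks"] += 1


def chest_is_open():
    return state["clicks"] >= 2


attempts = act_and_confirm(click_chest, chest_is_open, retries=3, wait=0)
print(attempts)
```

Raising an exception when every retry fails gives the rest of the script one place to react, for example by saving progress and sending a notification.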

How to Avoid Such Automation Scripts as a Game Developer?

You and your team are developing a game and you absolutely hate it when people try to automate it. How should you prevent that from happening? You can try to build a robust anti-cheat program that constantly monitors suspicious actions from any other program, but this will inevitably consume a huge chunk of computing power and slow down the game, leading to a terrible player experience. Or you can make the game hard to automate by adding a human-verification pop-up before any important in-game action takes place, but this will bring players more frowns and script writers more fun. The best way to prevent any kind of automation, in my humble opinion, is to make the boring parts of the game entertaining. Take farming in Albion Online as an example: it just seems too boring to sow, water and harvest crops on a quiet island every single day in this PvP game. How about changing the game mechanics so that you can invade other players’ islands and steal their crops? You might end up in a fight with the island owner, and if he wins the fight, you are forced to do farming work for him for the next few days and you won’t have the opportunity to invade others’ islands, unless of course you are willing to pay a hefty fee to bail yourself out of this slavery… Hmm, I wish I could be a game designer one day.

Thanks for reading and hope you have found some notes here useful!


Managed to make it work by combining C# and AutoHotKey. I’m pretty bad at using Python libraries, to be honest. It does simple tree/stone farming on my island.

If it works, it works. Did you use any CV or image recognition? I wonder how reliable the script is.